Why secured networked AI agents are the operational layer financial services has been waiting for.

Most organizations adopting AI in regulated environments are doing it backwards. They start with the model and work outward, hoping compliance will follow. It rarely does.

The fundamental challenge is not whether AI can generate content, write reports, or produce imagery. It can. The challenge is whether every output can withstand scrutiny from compliance teams, clients, and regulators. In financial services, healthcare, and legal practice, the answer to that question determines whether AI is an asset or a liability.

The Compliance Problem Nobody Talks About: Can agentic AI do the work in a way that every stakeholder in the chain can verify?

Traditional AI pipelines are monolithic. A single system ingests data, processes it, and produces output. When something goes wrong (a licensing violation, a hallucinated claim, a brand-inconsistent asset), the effort required to identify where the failure occurred can be substantial, because no intermediate step leaves its own record.

Agentic Architecture: Specialized Agents, Governed Workflows

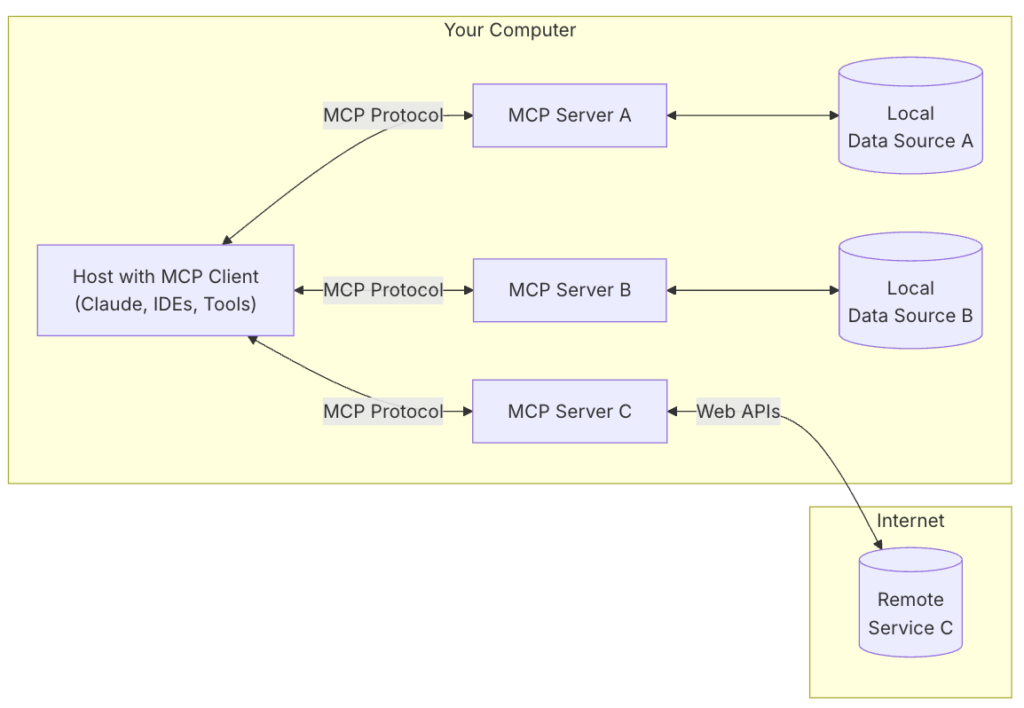

Agentic pipelines take a fundamentally different approach. Instead of a single monolithic system, the work is distributed across specialized agents, each responsible for a discrete function. An orchestration layer coordinates handoffs, enforces sequencing, and maintains the audit trail.

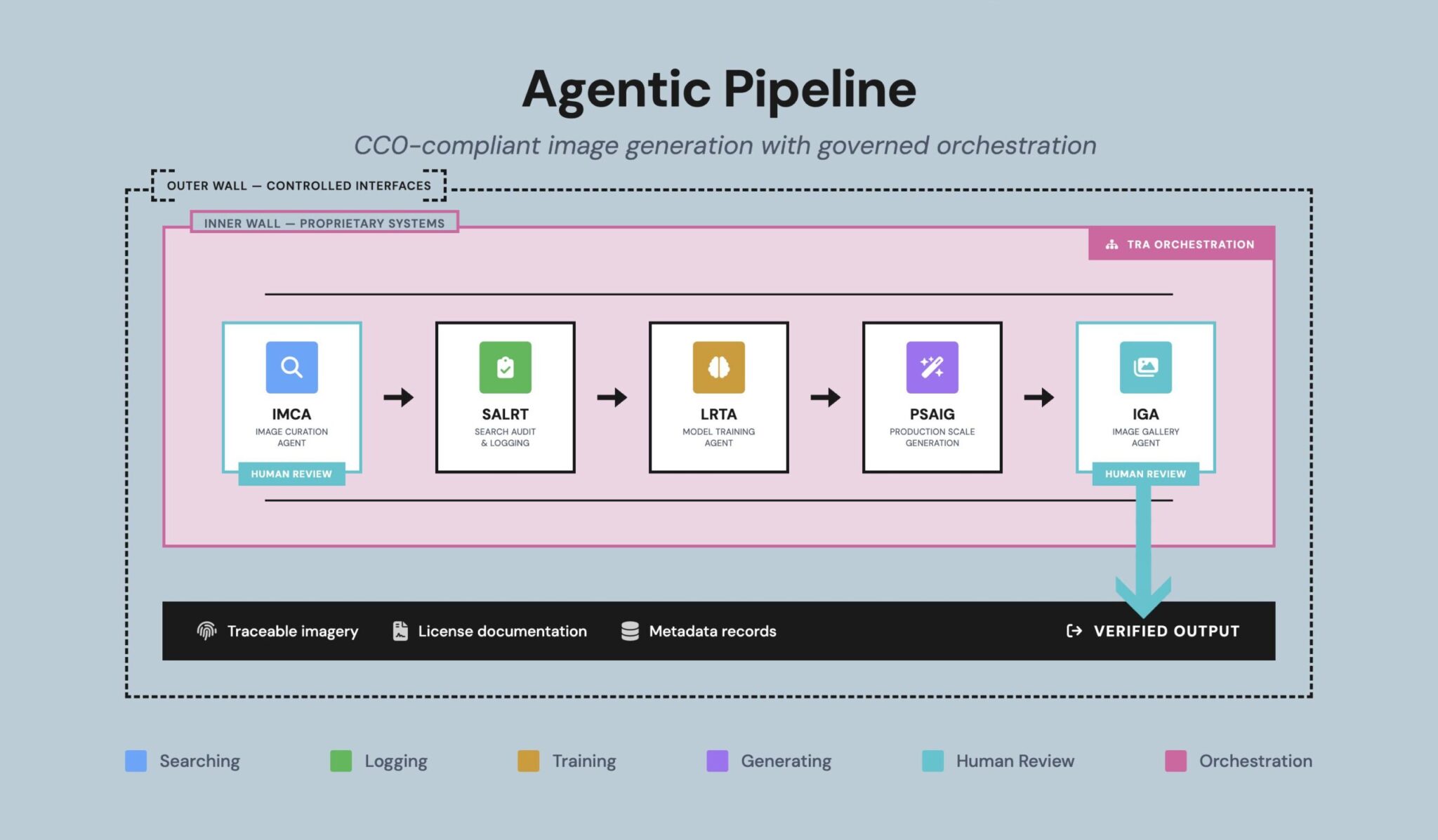

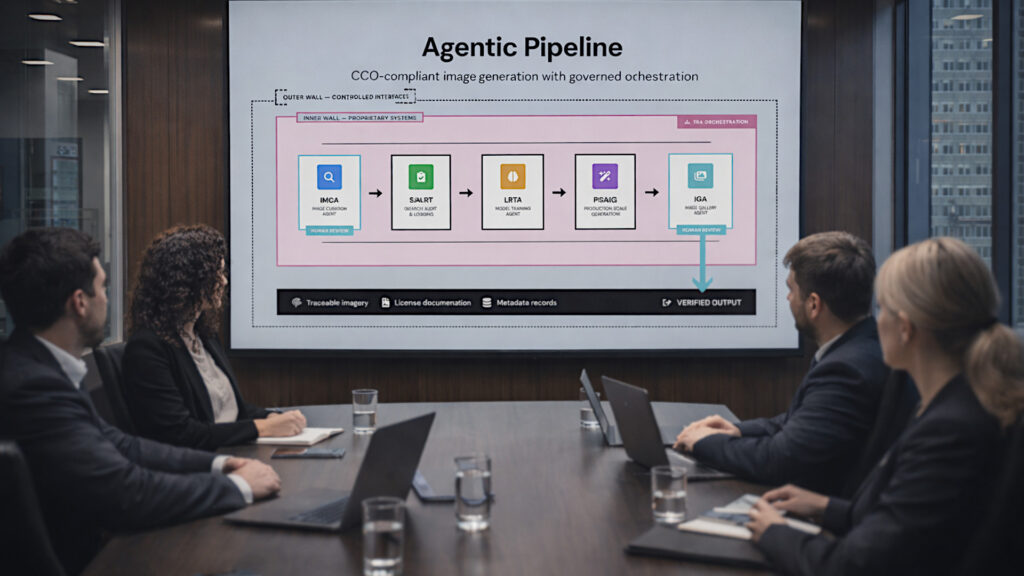

Consider a production pipeline for compliance-sensitive content. Rather than a single AI tool doing everything, the architecture employs dedicated agents for sourcing, verification, model training, generation, quality assurance, and delivery. Each agent operates within defined boundaries. Each produces records that downstream agents and human reviewers can inspect.

Agentic pipeline architecture: specialized agents with governed orchestration and human review gates. From Joe Skopek’s Financial Marketer article: “Marketing’s next frontier is autonomous networked intelligence.”

The orchestration agent functions as a traffic controller, routing work between agents based on status, priority, and pipeline rules. It does not make creative or compliance decisions. It enforces process. Human review gates are positioned at the points where judgment is irreplaceable–source curation and final output quality.
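The routing behavior described above can be sketched in a few lines. This is a minimal illustration, not the production orchestrator: the stage names come from the agent roles listed earlier, while `WorkItem`, `next_step`, and the placement of the two human gates (after sourcing and after QA) are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Stage order taken from the agent roles named in the article.
STAGES = ["sourcing", "verification", "training", "generation", "qa", "delivery"]

# Assumption: human gates sit at source curation and final output quality.
HUMAN_GATES = {"sourcing", "qa"}


@dataclass
class WorkItem:
    """Hypothetical unit of work moving through the pipeline."""
    item_id: str
    stage: str = "sourcing"
    approved_by_human: bool = False
    audit_log: List[str] = field(default_factory=list)


def next_step(item: WorkItem) -> Optional[str]:
    """Route an item to its next stop. The orchestrator enforces process only;
    it never makes the creative or compliance decision itself."""
    if item.stage in HUMAN_GATES and not item.approved_by_human:
        item.audit_log.append(f"{item.stage}: held for human review")
        return "human_review"
    idx = STAGES.index(item.stage)
    if idx + 1 == len(STAGES):
        item.audit_log.append(f"{item.stage}: pipeline complete")
        return None
    nxt = STAGES[idx + 1]
    item.audit_log.append(f"{item.stage} -> {nxt}")
    item.stage = nxt
    item.approved_by_human = False  # an approval covers one gate only
    return nxt
```

Every call appends to `audit_log`, so the sequence of handoffs and holds can be reviewed after the fact. An item that has not been approved at a gate cannot advance, regardless of priority.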

This is not theoretical architecture. Production systems built this way are operating today, handling thousands of assets through end-to-end pipelines where every step is logged, every input is traceable, and every output is defensible.

Trust You Can Demonstrate

In regulated environments, trust must be demonstrable rather than implied. Agentic systems are designed to produce clear, reviewable records of origin, licensing, and decision flow. Compliance discussions move away from subjective assurances and toward documented system behavior.

Every agent in the pipeline writes to a shared provenance record. When a sourcing agent identifies an asset, it logs the license type, the retrieval date, and the verification status. When a training agent builds a model, it records the dataset composition, the training parameters, and the lineage back to original sources. When a generation agent produces output, the full chain of custody is available on demand.
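One way to picture a shared provenance record is as a set of hash-linked entries, where each entry points back to the entry it was derived from. The sketch below is illustrative only: `ProvenanceEntry`, `ProvenanceLog`, the field names, and the sample values are all hypothetical, and it assumes a single parent per entry, whereas a real training run would typically reference many sources.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ProvenanceEntry:
    """Hypothetical record written by one agent for one action."""
    agent: str
    action: str
    details: dict
    parent_hash: Optional[str]

    def digest(self) -> str:
        # Stable content hash: changing any field changes the identifier.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class ProvenanceLog:
    """In-memory stand-in for the shared provenance record."""

    def __init__(self) -> None:
        self._entries: Dict[str, ProvenanceEntry] = {}

    def append(self, agent: str, action: str, details: dict,
               parent_hash: Optional[str] = None) -> str:
        if parent_hash is not None and parent_hash not in self._entries:
            raise KeyError("parent entry is not in the log")
        entry = ProvenanceEntry(agent, action, details, parent_hash)
        entry_hash = entry.digest()
        self._entries[entry_hash] = entry
        return entry_hash

    def chain_of_custody(self, entry_hash: str) -> List[ProvenanceEntry]:
        """Walk from an output back to its original source."""
        chain = []
        current: Optional[str] = entry_hash
        while current is not None:
            entry = self._entries[current]
            chain.append(entry)
            current = entry.parent_hash
        return chain


# Placeholder values for illustration only.
log = ProvenanceLog()
src = log.append("sourcing", "asset_identified",
                 {"license": "CC0", "retrieved": "2024-01-15", "verified": True})
trn = log.append("training", "model_built",
                 {"dataset_items": 1, "epochs": 10}, parent_hash=src)
gen = log.append("generation", "output_produced",
                 {"asset": "example-image"}, parent_hash=trn)
custody = [entry.agent for entry in log.chain_of_custody(gen)]
```

Here `custody` lists the agents from the generated output back to the original source. Because each identifier is a hash of the entry's content, including its parent's hash, an altered record no longer matches the identifier that downstream entries reference.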

This matters because regulators do not ask whether your AI is good. They ask whether you can prove it did what you say it did. Agentic pipelines answer that question by design, not by retrofit.

Collaboration Without Exposure

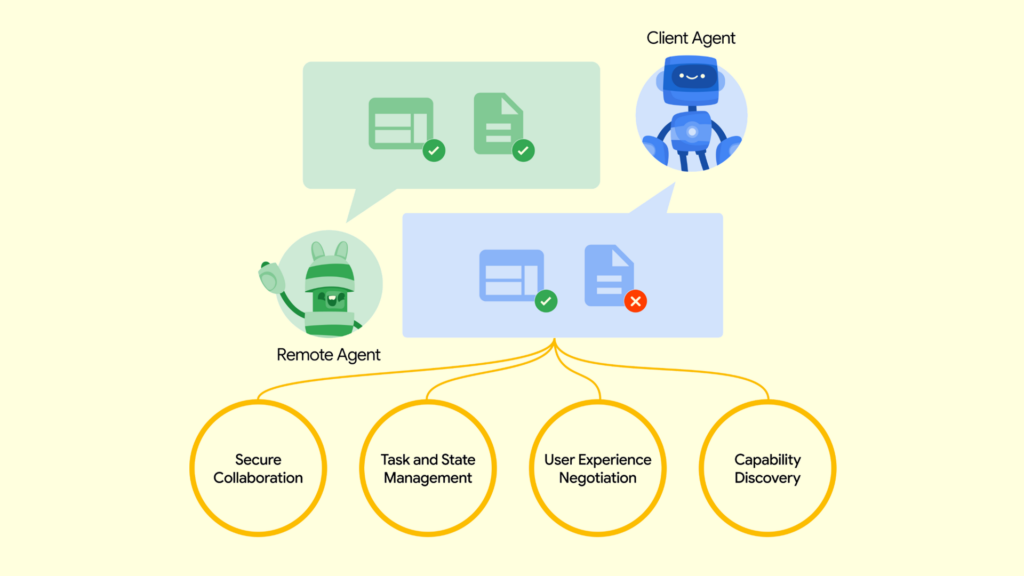

Financial services firms have historically avoided collaboration on models or data because the risk outweighed the benefit. Sharing training data exposes proprietary logic. Sharing models reveals competitive advantage. The default has been isolation.

Agentic architecture changes this calculation through what we call the Double Garden Wall. The inner wall protects proprietary datasets, screening logic, and brand-governance frameworks. These remain sealed and non-negotiable. The outer wall exposes only what external systems require: controlled capability interfaces, verifiable records, and traceable outputs.
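At its simplest, the outer wall behaves like an allow-list: only explicitly approved fields ever leave the system. The following is a rough sketch of that idea under stated assumptions. `InternalResult`, `OUTER_FIELDS`, `export_for_partner`, and every field name are invented for illustration and do not describe any specific implementation.

```python
from dataclasses import dataclass

# Assumption: these are the only fields an external system ever needs.
OUTER_FIELDS = ("output_uri", "license", "provenance_id")


@dataclass
class InternalResult:
    """Hypothetical full record held behind the inner wall."""
    output_uri: str
    license: str
    provenance_id: str
    screening_scores: dict   # proprietary screening logic output
    dataset_paths: list      # proprietary dataset locations


def export_for_partner(result: InternalResult) -> dict:
    """Build the outward-facing view from the allow-list, so a newly added
    internal field is sealed by default rather than exposed by default."""
    return {name: getattr(result, name) for name in OUTER_FIELDS}
```

The design choice worth noting is the direction of the default. A deny-list would leak any internal field someone forgot to block, while an allow-list exposes nothing that has not been deliberately approved.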

Built this way, systems gain interoperability without dilution, collaboration without intellectual property leakage, and scale without compromising compliance.

Advances in distributed learning and controlled execution now allow verified partners to contribute capability without sharing raw data or proprietary logic. Agents can be registered in decentralized directories, verified against published capability specifications, and bound by enforceable policy contracts–all without exposing internal methods. Capability expands while risk remains bounded.

Parallel Workflows Without Parallel Headcount

Traditional AI pipelines execute sequentially. One step finishes before the next begins. Networked agentic systems enable multiple stages of work to operate concurrently across compatible agents. This event-driven, contract-based execution model allows firms to handle volume surges without linear increases in staffing or infrastructure.
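The difference between sequential and concurrent execution can be shown with a small queue-and-workers sketch using Python's standard `asyncio` library. The agent names, job labels, and the `asyncio.sleep` call standing in for real work are assumptions for the example. A production system would dispatch to actual agents and enforce its policy contracts at this point.

```python
import asyncio
from typing import List, Tuple


async def agent_worker(name: str, queue: asyncio.Queue,
                       results: List[Tuple[str, str]]) -> None:
    """Pull jobs from the shared queue until cancelled."""
    while True:
        job = await queue.get()
        await asyncio.sleep(0.01)  # stand-in for real agent work
        results.append((name, job))
        queue.task_done()


async def run_pipeline(jobs: List[str], n_agents: int) -> List[Tuple[str, str]]:
    queue: asyncio.Queue = asyncio.Queue()
    results: List[Tuple[str, str]] = []
    for job in jobs:
        queue.put_nowait(job)
    workers = [
        asyncio.create_task(agent_worker(f"agent-{i}", queue, results))
        for i in range(n_agents)
    ]
    await queue.join()             # wait until every job is processed
    for w in workers:
        w.cancel()                 # workers loop forever; stop them explicitly
    await asyncio.gather(*workers, return_exceptions=True)
    return results


jobs = [f"asset-{n}" for n in range(6)]
completed = asyncio.run(run_pipeline(jobs, n_agents=3))
```

Absorbing a volume surge in this model means raising `n_agents` or adding compatible agents to the pool, rather than adding a person for each additional unit of work.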

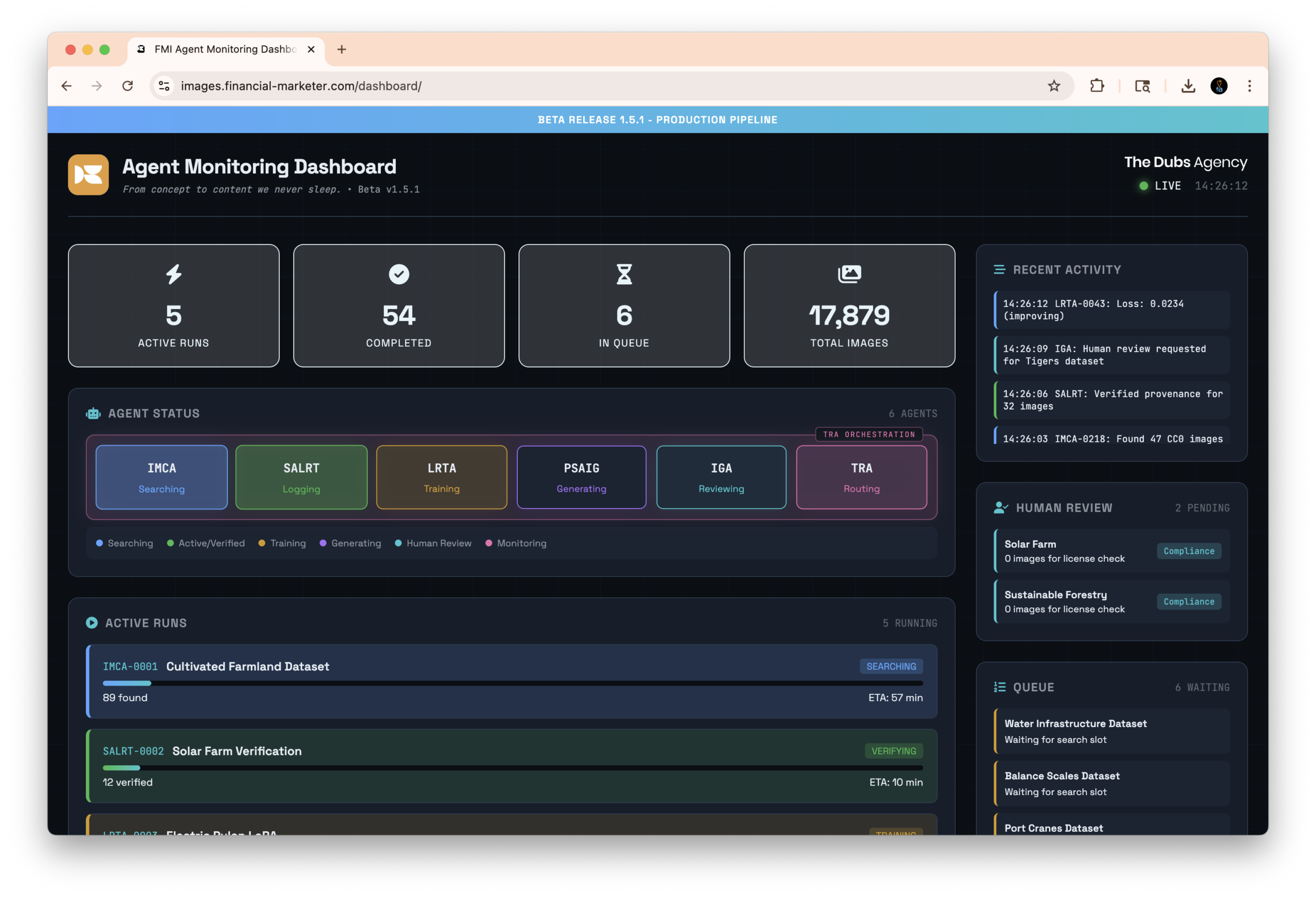

Agent orchestration and monitoring dashboard: real-time visibility into scalable concurrent pipeline operations.

A production monitoring dashboard shows the reality of this approach. Multiple agents operate simultaneously across sourcing, verification, training, and generation. Active runs display estimated completion times. Incoming work is managed in a queue. Human review requests surface precisely when human judgment is needed, not before and not after.

This is the operational difference between AI as a project and AI as infrastructure. Projects require constant management. Infrastructure runs, scales, and reports.

A Live Production Case

To make this concrete: a production-grade pipeline operating today generates CC0 (Creative Commons Zero) compliant imagery for regulated industries. The system employs specialized agents for sourcing, dataset preparation, model fine-tuning, production-scale generation, and gallery management. Governance is strict: public-domain inputs only, full chain-of-custody tracking, and aesthetic screening for accuracy and consistency.

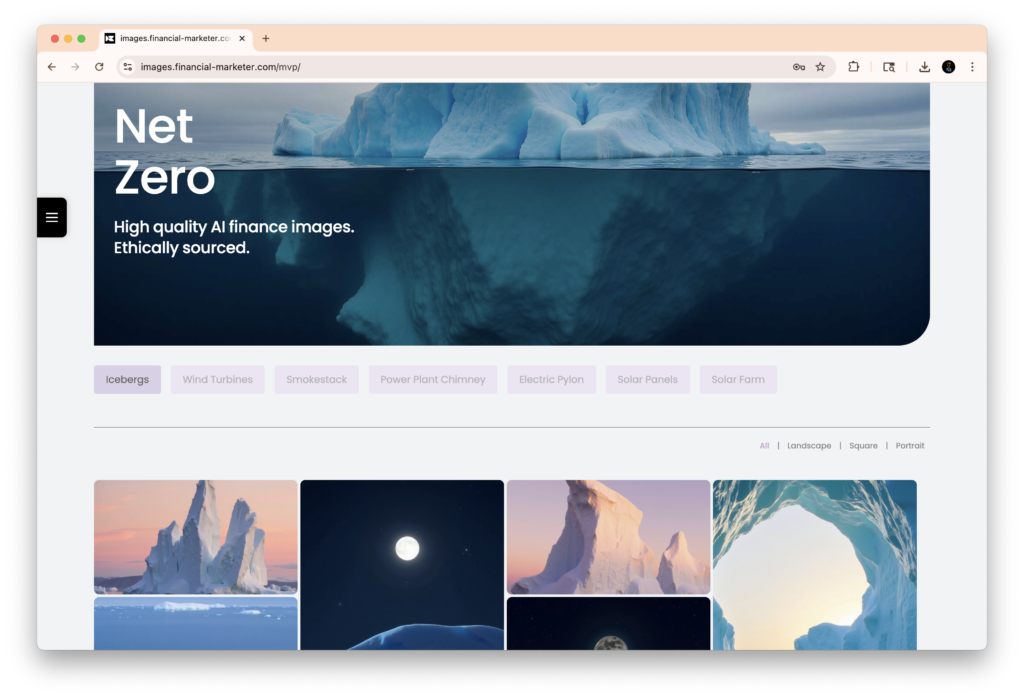

Membership image gallery with category-based organization, aspect ratio filtering, and curated industry-specific collections.

The output is not experimental. These are production assets used in client-facing materials where compliance review is mandatory. Each image can be traced back through the generation agent, through the model that produced it, through the training data that informed the model, back to the original public-domain source with full license documentation.

The system delivers assets in multiple aspect ratios–landscape, square, portrait–with metadata tagging for camera view, color palette, weather conditions, and semantic content. Every asset is available in tiered quality levels for different use cases, from full-resolution production to optimized web previews.

Once agents are registered, verified, and policy-bound, the pipeline enables controlled collaboration through decentralized registries, zero-trust interoperability where each agent governs its own exposure, distributed fine-tuning across verified compute without revealing private datasets, elastic job distribution across compatible agents, and production-scale auditability where every autonomous step leaves a clear record.

ELEVATOR PITCH:

Regulated industries need AI that produces auditable, compliant output at production scale. Agentic pipelines deliver this by orchestrating specialized AI agents through governed workflows where every action is logged, every source is traceable, and human judgment is preserved at the decisions that matter. The result is faster execution with stronger controls–not weaker ones.

Why the C-Suite Should Care

The value proposition is straightforward. Stronger controls. Faster output. Broader capability without compromising compliance posture. This is the difference between AI as a novelty and AI as operational infrastructure.

Financial services leaders should evaluate agentic systems against three uncompromising questions:

1. Can the system scale without weakening oversight?

2. Can every output withstand compliance, client, and regulator review?

3. As the firm grows, does the technology reinforce discipline–or fracture under pressure?

The industry does not need spectacle. It needs systems that behave predictably across volume spikes, regulatory cycles, and brand-governed workflows. When implemented with rigor, agentic AI is not about disruption. It is about operational reliability at a scale previously out of reach.

The firms that excel will not be those deploying the most colorful demonstrations. They will be the ones deploying systems that deliver controlled growth, verifiable governance, rapid execution, and credible audit trails.

The Challenge of Building in an Evolving Space

There is an honest tension in this work that deserves acknowledgment. The infrastructure layers that make agentic pipelines possible–agent discovery protocols, capability registries, policy enforcement standards–are still maturing. Building production systems on evolving foundations requires a specific kind of engineering discipline: design for what exists today while architecting for what arrives tomorrow.

This is not a reason to wait. The core principles – specialized agents, governed orchestration, traceable provenance, human gates at judgment points – are stable and proven. The interoperability layer that connects these systems across organizational boundaries is advancing rapidly through open standards and community-driven development.

What this means practically is that early movers gain compounding advantages. The organizations investing now in agentic infrastructure are building institutional knowledge, training teams, and establishing operational patterns that late adopters will spend years replicating. The learning curve is real, and it rewards those who start.

The shift toward networked agentic pipelines is already underway. The institutions that master it early will define the standard others are forced to follow.

THE BOTTOM LINE

Agentic pipelines are not about replacing human judgment. They are about automating every mechanical step between the moments where human judgment actually matters – and proving that the mechanical steps were executed correctly. For regulated industries, that combination of speed, scale, and verifiable compliance is not optional. It is the next operational baseline.

Velocity Ascent builds AI-powered solutions for regulated industries. We specialize in agentic solutions, including pipeline architecture, ethical AI sourcing, and production-scale automation with full provenance tracking.