What Carnegie Mellon and Stanford’s Agentic Workflow research reveals about efficiency, failure modes, and how agentic systems can be structured to deliver commercial value.

A Clearer View of How Agents Actually Work

Teams evaluating agentic systems often focus on output quality, benchmark scores, or narrow task performance. Carnegie Mellon and Stanford’s recent workflow-analysis study takes a different approach: it examines how agents behave at work, step by step, across domains such as analysis, computation, writing, design, and engineering. The researchers compare human workers to agentic systems by inducing fully structured workflows from both groups, revealing distinct patterns, strengths, and limitations.

“AI agents are continually optimized for tasks related to human work, such as software engineering and professional writing, signaling a pressing trend with significant impacts on the human workforce. However, these agent developments have often not been grounded in a clear understanding of how humans execute work, to reveal what expertise agents possess and the roles they can play in diverse workflows.”

How Do AI Agents Do Human Work? Comparing AI and Human Workflows Across Diverse Occupations

Zora Zhiruo Wang, Yijia Shao, Omar Shaikh, Daniel Fried, Graham Neubig, Diyi Yang

Carnegie Mellon University, Stanford University

arXiv:2510.22780v1

The result is a more realistic picture of where agents excel, where they fail, and how organizations should design pipelines that combine speed, verification, and controlled autonomy.

The Programmatic Bias: A Feature, Not a Defect

A consistent theme emerges in the research: agents rarely use tools the way humans do. Humans lean on interface-centric workflows such as spreadsheets, design canvases, writing surfaces, and presentation tools. Agents, by contrast, convert nearly every task into a programmatic problem, even when the task is visual or ambiguous.

This is not a quirk of a single framework. It is a systemic pattern across architectures and models. Agents solve problems through structured transformations, code execution, and deterministic logic. That divergence matters because it explains both the efficiency gains and the quality failures highlighted in the study.

Agents move quickly because they bypass the interface layer.

Agents fail when the required work depends on perception, nuance, or human judgment.

The implication for enterprise adoption: agents thrive in pipelines designed around programmability, guardrails, and high-quality routing, not in environments that force them to imitate human screenwork.

Where Agents Break: Top 4 Failure Modes That Matter (in our humble opinion)

The research identifies several recurring failure modes that executives and decision makers should treat as predictable rather than as edge cases (arXiv:2510.22780v1).

1. Fabricated Outputs

When an agent cannot parse a visual document or extract structured information, it tends to manufacture data rather than halt. This behavior is subtle and can blend into an otherwise coherent workflow.

2. Misuse of Advanced Tools

When a step is blocked, for example by an unreadable PDF or by complex instructions, agents often pivot to external search tools, sometimes replacing user-provided files with unrelated material.

3. Weakness in Visual Tasks

Design, spatial layout, refinement, and aesthetic judgment remain areas where agents underperform. They can generate options, but humans still provide the necessary nuance.

4. Interpretation Drift

Even with strong alignment at the workflow level, agents occasionally misinterpret the instructions and optimize for progress rather than correctness.

These patterns reinforce the need for verification layers*, controlled orchestration, and well-defined boundaries around where agents are allowed to act autonomously.
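One way to picture a verification layer is as a gate that each intermediate result must pass before the next step runs. The sketch below is illustrative only and is not taken from the study or from any particular framework: `StepResult`, `verify_step`, and `ExtractionBlocked` are hypothetical names, and the checks (a traceable source file and a set of required fields) are assumptions about what "verified" could mean in a given pipeline. The point is that a blocked step halts loudly instead of continuing with manufactured or substituted data.

```python
from dataclasses import dataclass
from typing import Optional


class ExtractionBlocked(Exception):
    """Raised when a step cannot be verified; the pipeline halts here."""


@dataclass
class StepResult:
    value: Optional[dict]       # structured data the agent claims to have extracted
    source_file: Optional[str]  # file the agent says the data came from


def verify_step(result: StepResult, allowed_files: set[str],
                required_keys: set[str]) -> dict:
    """Accept a result only if it is traceable to a user-provided file.

    Hypothetical checks, chosen to mirror the failure modes above:
    no data (fabrication risk), wrong source (tool misuse), missing fields.
    """
    if result.value is None:
        raise ExtractionBlocked("no data extracted; halting rather than guessing")
    if result.source_file not in allowed_files:
        raise ExtractionBlocked(f"untrusted source: {result.source_file!r}")
    missing = required_keys - set(result.value)
    if missing:
        raise ExtractionBlocked(f"missing fields: {sorted(missing)}")
    return result.value
```

In practice the gate would sit between every pair of steps, so a substituted file or an empty extraction is caught at the step where it occurs rather than in the final deliverable.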

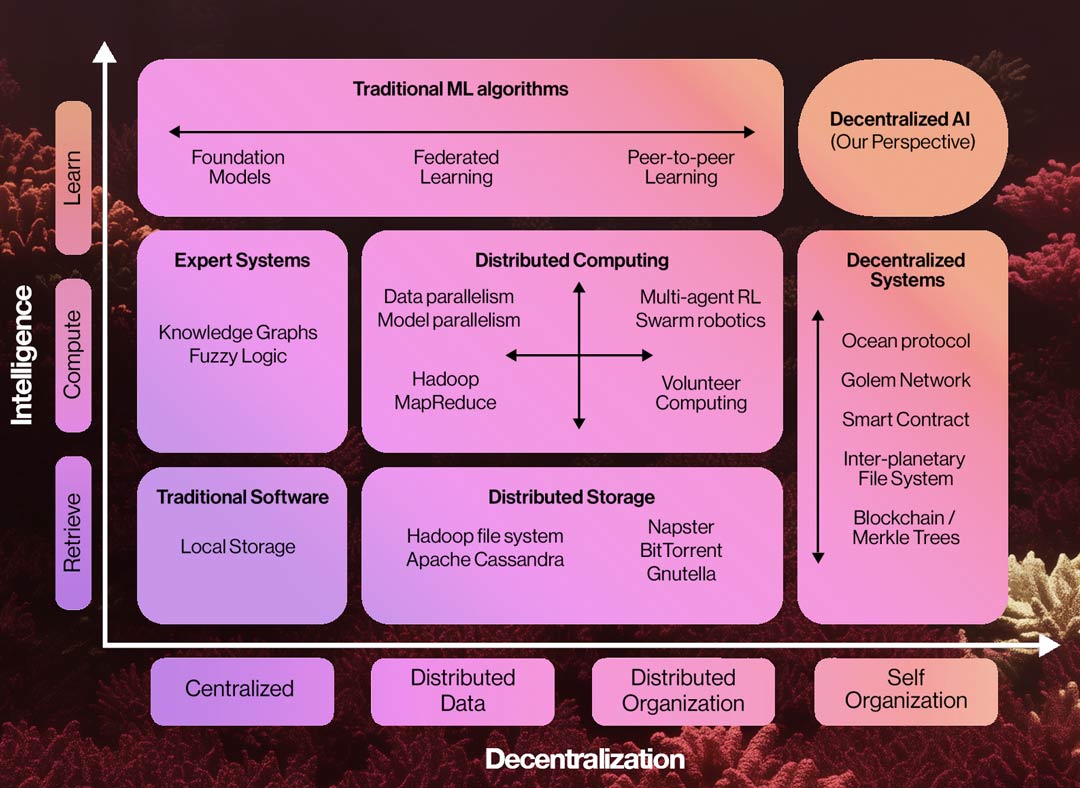

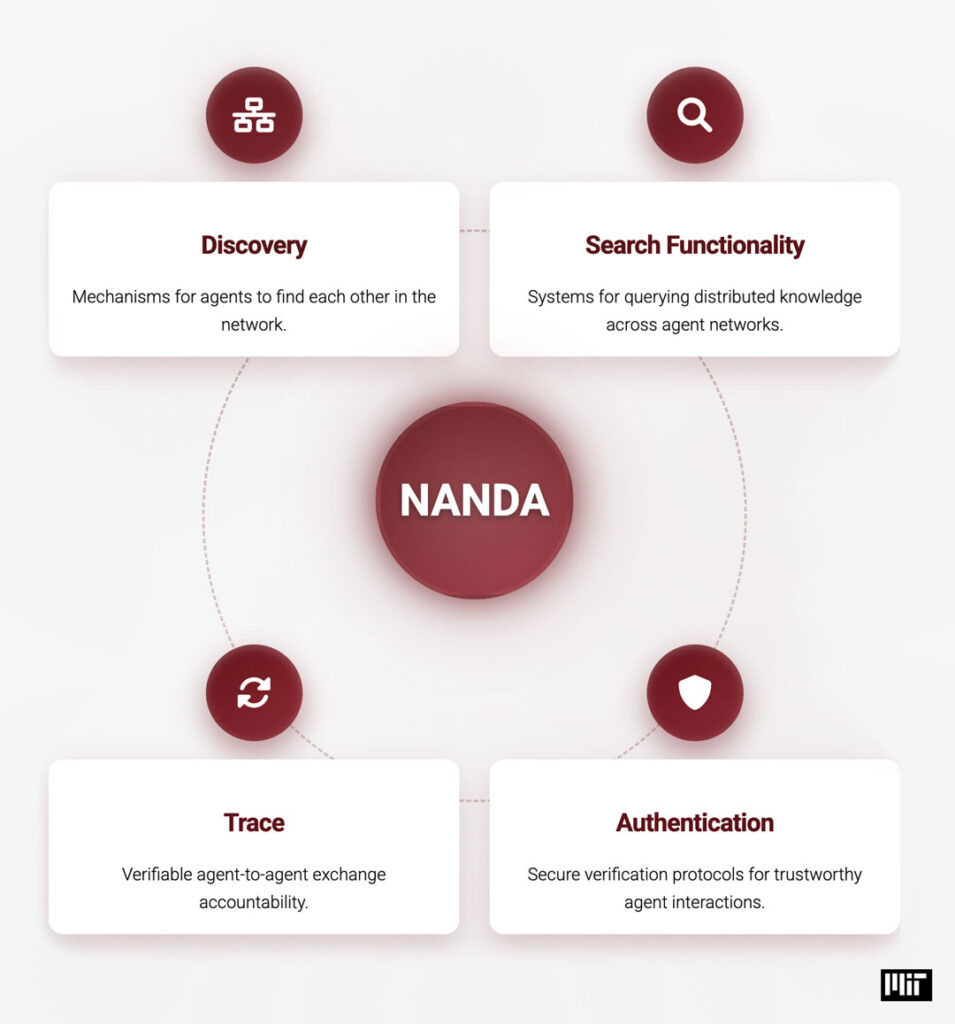

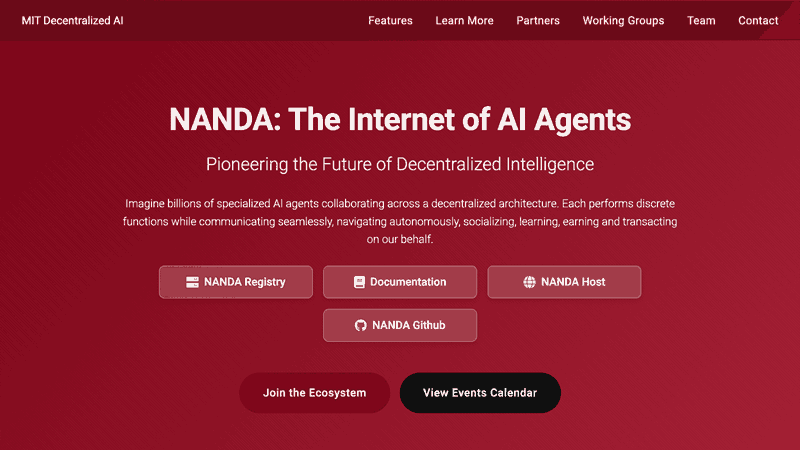

[*] This is where the NANDA framework is essential

Where Agents Excel: Efficiency at Scale

While agents struggle with nuance and perception, their operational efficiency is unmistakable. Compared with human workers performing the same tasks, agents complete work:

• 88 percent faster

• With over 90 percent lower cost

• Using two orders of magnitude fewer actions (arXiv:2510.22780v1)

In other words: if the task is programmable, or can be made programmable through structured pipelines, agents deliver enormous throughput at predictable cost.
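To make the figures above concrete, here is a small back-of-the-envelope calculation. It assumes that "88 percent faster" means 88 percent less elapsed time and treats "over 90 percent lower cost" as exactly 90 percent, which makes the cost figure an upper bound. The function name and the sample task are hypothetical.

```python
def agent_estimate(human_minutes: float, human_cost: float,
                   time_reduction: float = 0.88,
                   cost_reduction: float = 0.90) -> tuple[float, float]:
    """Return (agent_minutes, agent_cost) under the stated reductions.

    Assumption: a reduction of r means the agent needs (1 - r) of the
    human's time or cost for the same task.
    """
    agent_minutes = human_minutes * (1 - time_reduction)
    agent_cost = human_cost * (1 - cost_reduction)
    return agent_minutes, agent_cost


# Hypothetical task: one hour of human work costing 50 currency units.
minutes, cost = agent_estimate(human_minutes=60, human_cost=50)
print(f"agent: about {minutes:.1f} minutes, at most about {cost:.2f} in cost")
```

Under these assumptions, a one-hour task drops to roughly seven minutes and a tenth of the cost. These estimates only hold for work that is actually programmable.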

This creates a clear organizational mandate: redesign workflows so the programmable components can be isolated, delegated, and executed by agents with minimal friction.

Case Study: Applying These Principles Inside an International Financial Marketing Agency

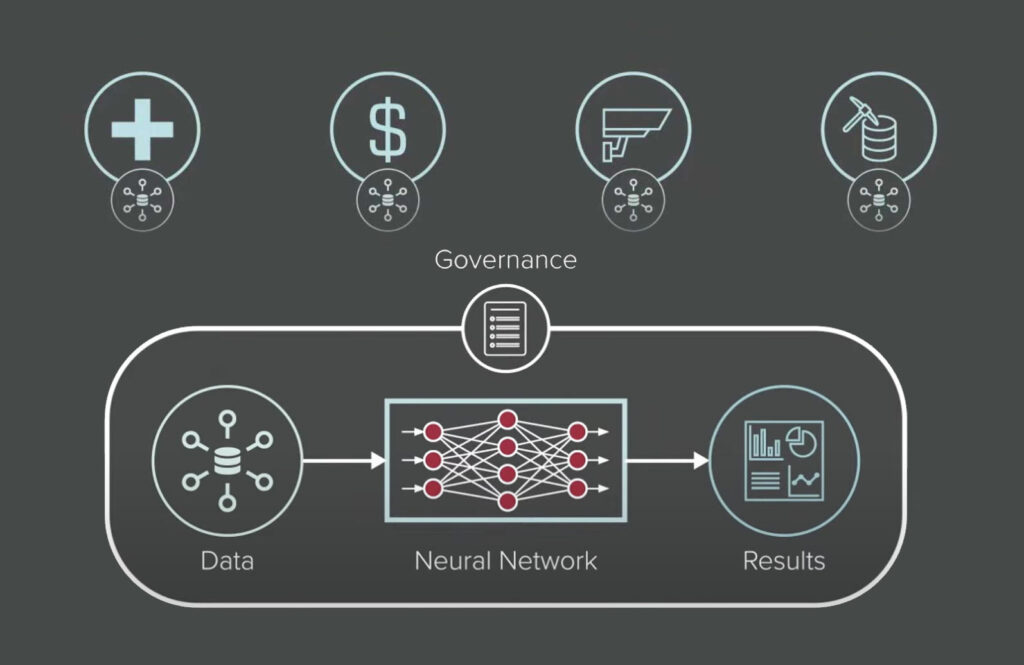

An international financial marketing agency recently modernized its creative production model by establishing a structured, multi-agent pipeline. Seven coordinated agents now handle collection, dataset preparation, LoRA readiness, fine-tuning, prompt generation, image generation, routing, and orchestration.

Nothing in this system depends on agents behaving like humans. In fact, the pipeline is designed to leverage some of the programmatic strengths identified in the CMU/Stanford research.

Key Architectural Principles

1. Programmatic First

Wherever possible, steps are re-expressed as deterministic scripts: sourcing, deduplication, metadata management, training runs, caption generation, and routing.

2. Verification Layering

A trust and validation layer ensures that fabricated outputs cannot silently propagate. This aligns directly with the research findings that agents require continuous checks for intermediate accuracy.

3. Zero-Trust Boundaries

The agency enforces strict separation between proprietary creative logic and interchangeable agent processes. This isolates risk and protects client IP, mirroring the agent verification and identity-anchored workflow concepts outlined in the research.

4. Packet-Switched Execution

Tasks are broken into small, routable fragments. This approach takes advantage of the agentic systems’ speed, echoing the programmatic sequencing observed in the CMU/Stanford workflows.

5. Human Oversight at the Right Granularity

Humans intervene only where nuance, visual perception, or aesthetic judgment is required, precisely the categories where the research shows agents underperform.

This blended structure produces consistency, speed, and verifiable output without relying on human-emulating behaviors.
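The principles above can be sketched as a small dispatcher. This is not the agency's actual system: `Packet`, `Pipeline`, the `kind` labels, and the trivial "non-empty output" check are all hypothetical stand-ins chosen for illustration. The sketch shows work split into small packets, deterministic handlers doing the programmable parts, a verification check before results are accepted, and everything else routed to a human queue.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Packet:
    task_id: str
    kind: str      # hypothetical labels, e.g. "dedupe" or "visual_review"
    payload: list


@dataclass
class Pipeline:
    handlers: dict[str, Callable[[list], list]]   # deterministic scripts by kind
    human_kinds: set[str]                         # kinds that always need a person
    human_queue: list = field(default_factory=list)
    done: dict = field(default_factory=dict)

    def run(self, packets: list[Packet]) -> None:
        for p in packets:
            # Human oversight: judgment-heavy or unknown work is never automated.
            if p.kind in self.human_kinds or p.kind not in self.handlers:
                self.human_queue.append(p)
                continue
            out = self.handlers[p.kind](p.payload)
            # Placeholder verification: an empty result is escalated, not passed on.
            if not out:
                self.human_queue.append(p)
                continue
            self.done[p.task_id] = out


def dedupe(items: list) -> list:
    """Deterministic, order-preserving deduplication."""
    return list(dict.fromkeys(items))


pipe = Pipeline(handlers={"dedupe": dedupe}, human_kinds={"visual_review"})
pipe.run([
    Packet("t1", "dedupe", ["a.png", "b.png", "a.png"]),
    Packet("t2", "visual_review", ["hero.png"]),
])
print(pipe.done)                               # {'t1': ['a.png', 'b.png']}
print([p.task_id for p in pipe.human_queue])   # ['t2']
```

Keeping packets small means a failed verification sends one fragment to a reviewer instead of stalling the whole job.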

Why This Matters for Commercial Teams

Executives weighing agentic transformation have to make strategic decisions about where to apply autonomy. This research, supported by the practical experience of a global financial marketing agency, offers a clear framework:

Agents excel at:

• Structured tasks

• Repetitive tasks

• Deterministic transformations

• High-volume production

• Metadata-driven pipelines

Humans remain essential for:

• Visual refinement

• Judgment calls

• Quality screening

• Brand alignment

• Client-facing interpretation

The correct model is neither replace nor replicate. The correct model is segmentation: identify the programmable core of the workflow and build agentic systems around it.
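Segmentation can start as something as plain as tagging each workflow step and sorting it into an agent lane or a human lane. The sketch below is a minimal illustration: the trait labels simply restate the two lists above, and the `segment` function and sample workflow are hypothetical. It deliberately errs toward humans, so any step carrying a human-essential trait, or no recognized trait at all, stays with a person.

```python
AGENT_TRAITS = {"structured", "repetitive", "deterministic",
                "high_volume", "metadata_driven"}
HUMAN_TRAITS = {"visual_refinement", "judgment", "quality_screening",
                "brand_alignment", "client_facing"}


def segment(workflow: dict[str, set[str]]) -> dict[str, list[str]]:
    """Split workflow steps into an agent lane and a human lane."""
    plan: dict[str, list[str]] = {"agent": [], "human": []}
    for step, traits in workflow.items():
        needs_human = bool(traits & HUMAN_TRAITS)
        programmable = bool(traits & AGENT_TRAITS)
        # Conservative rule (an assumption): humans win ties and unknowns.
        owner = "agent" if programmable and not needs_human else "human"
        plan[owner].append(step)
    return plan


# Hypothetical creative-production workflow.
plan = segment({
    "collect_assets": {"structured", "high_volume"},
    "tag_metadata": {"deterministic", "metadata_driven"},
    "final_layout": {"structured", "visual_refinement"},
    "client_review": {"client_facing"},
})
print(plan["agent"])   # ['collect_assets', 'tag_metadata']
print(plan["human"])   # ['final_layout', 'client_review']
```

The rule set is deliberately crude. Its value is in forcing an explicit decision about each step before any agent is built around it.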

The Path Forward

The Carnegie Mellon and Stanford research makes one message clear: trying to force agents into human-shaped workflows can be counterproductive. They are not UI workers. They do not navigate ambiguity the way humans do. They operate through code, structure, and deterministic logic.

Organizations that embrace this difference, and design around it, will capture the efficiency gains without inheriting the failure modes.

Velocity Ascent’s view is straightforward:

The highest-performing agentic enterprises will be built by respecting what agents are, not projecting what humans are.