The Convergence of Edge Infrastructure, Operational Supervision, and Autonomous Systems

Most discussions around AI focus on models.

Most discussions around IoT focus on devices.

But a quieter and potentially more important shift is occurring underneath both industries: the separation of operational intelligence from proprietary hardware.

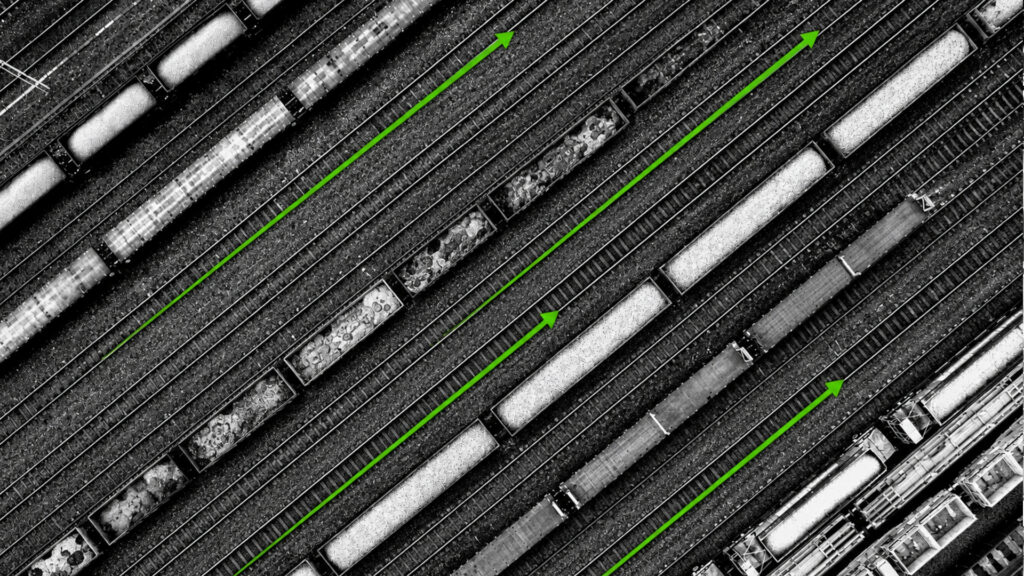

Across industrial automation, distributed computing, edge AI, and agentic systems, organizations are beginning to adopt hardware-agnostic orchestration layers capable of supervising and deploying workloads across highly heterogeneous environments. That means software intelligence can increasingly move independently of the physical infrastructure beneath it.

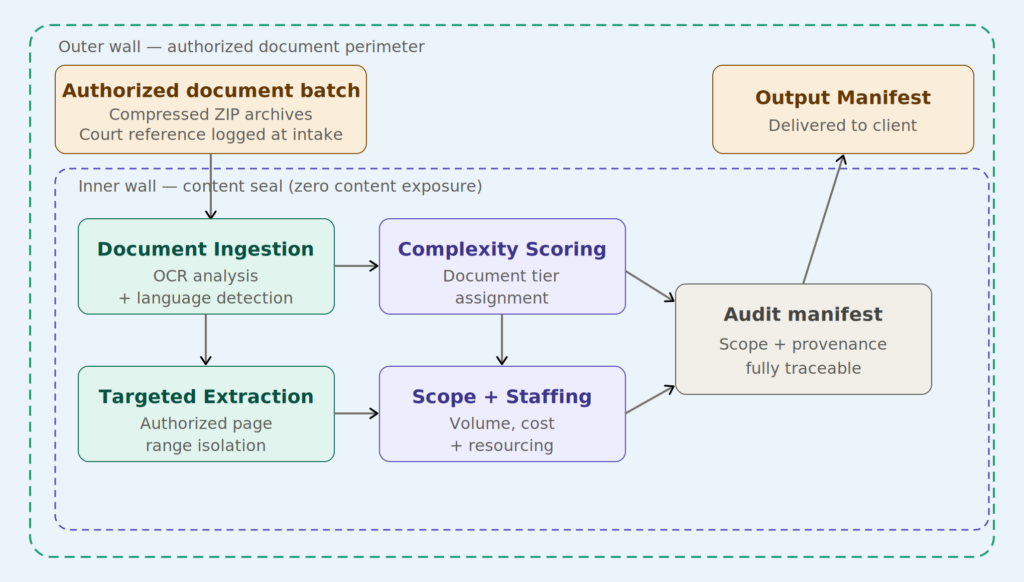

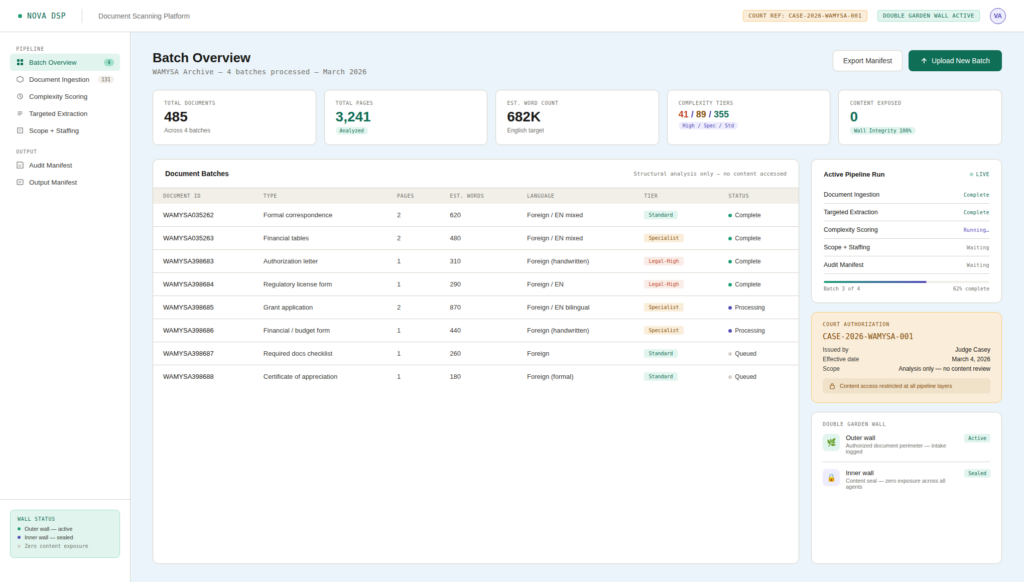

Velocity Ascent designs agentic and edge-supervised systems that maximize what AI can do autonomously across cloud, edge, and operational environments – while remaining precisely aligned with the legal, regulatory, security, and compliance requirements specific to each client’s infrastructure and industry context.

This is not merely a technical optimization. It is becoming an operational strategy. The same architectural principles now driving industrial edge modernization are also beginning to shape the future of agentic AI systems.

The Shift From Fixed Hardware to Portable Intelligence

For decades, industrial and operational systems were built around tightly integrated hardware stacks. PLCs, SCADA systems, embedded controllers, and industrial gateways were often deeply tied to specific vendors and deployment models. Expanding or modernizing those environments typically required significant infrastructure replacement and operational disruption.

That model is beginning to erode.

Modern edge platforms are moving toward software-defined operations where orchestration, supervision, and intelligence exist independently from the underlying hardware layer. Applications are increasingly containerized. Workloads are portable. Infrastructure is abstracted. Supervision is centralized.

This creates operational flexibility that older architectures were never designed to support.

An intelligent workload that once depended on a specific physical appliance can now move between hardware environments with minimal reconfiguration. AI inference can execute locally at the edge rather than relying exclusively on centralized cloud infrastructure. Operational systems can scale horizontally across fleets of devices rather than vertically through increasingly expensive proprietary infrastructure.

In industrial environments, this transition is often described through concepts such as virtual PLCs, edge-native SCADA, and software-defined automation.

In AI infrastructure, the same trend is beginning to emerge through distributed agentic systems.

The Convergence Between Industrial Edge and Agentic AI

At first glance, industrial automation and agentic AI appear to belong to separate categories.

In practice, they are beginning to solve remarkably similar problems.

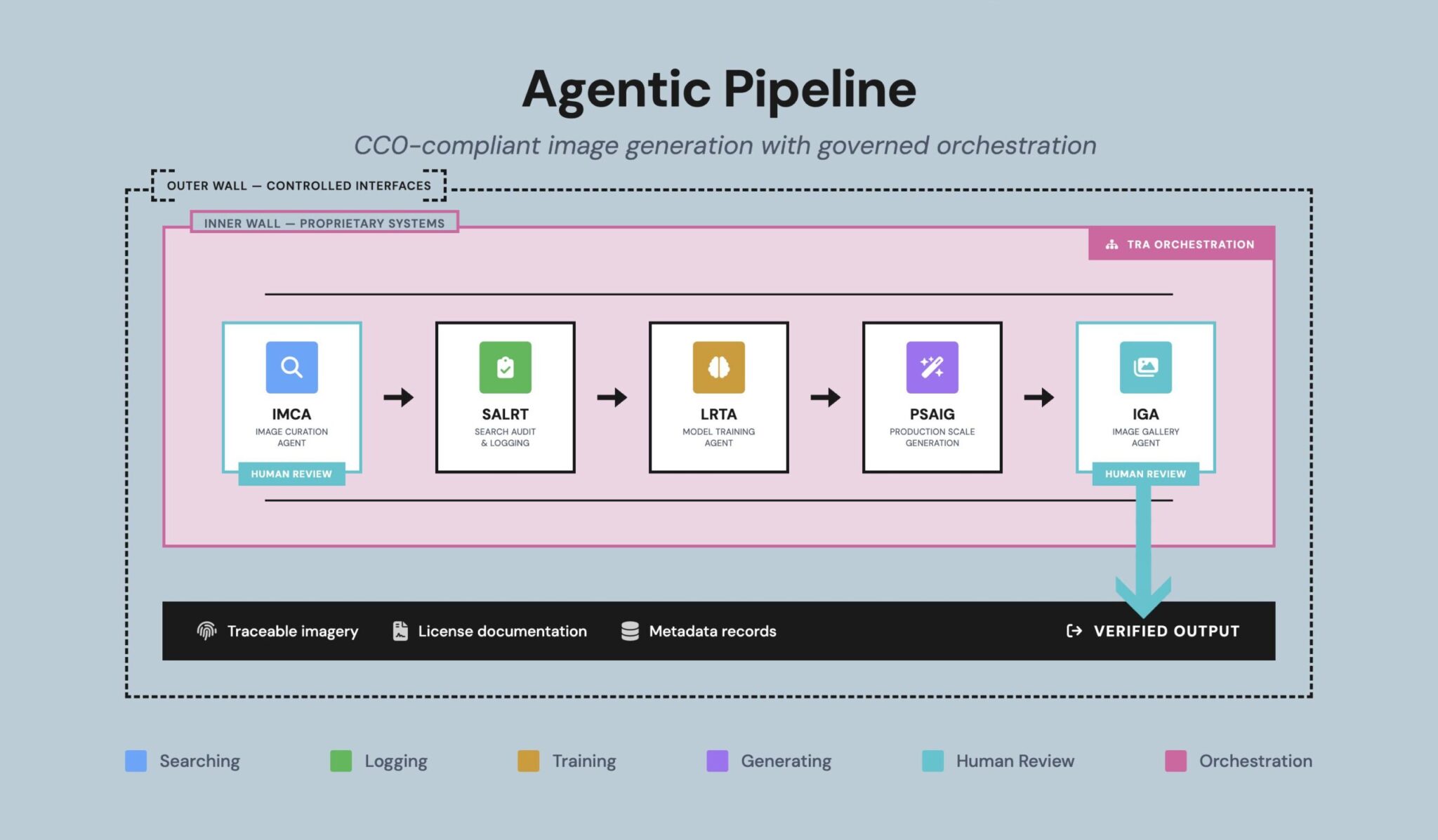

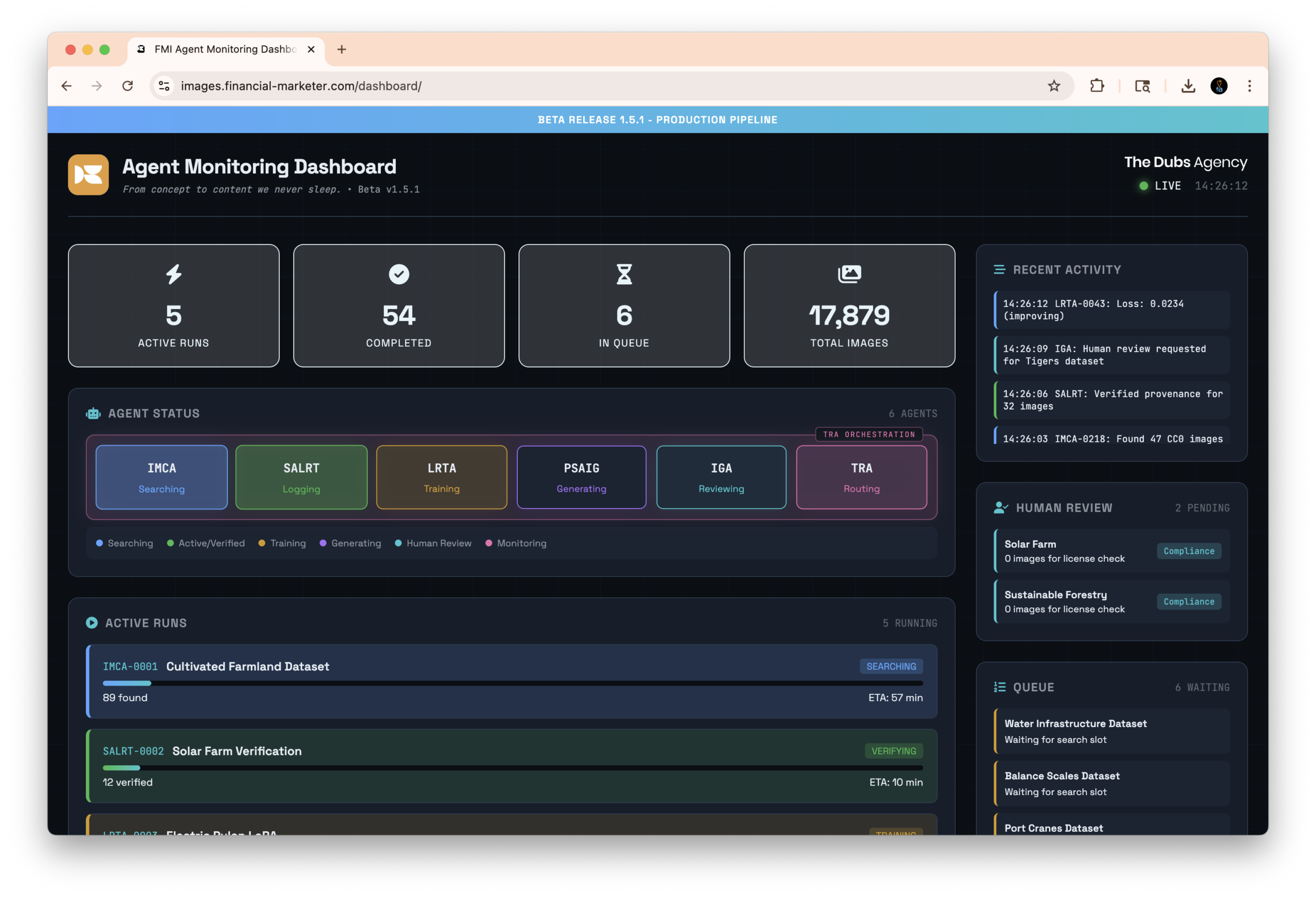

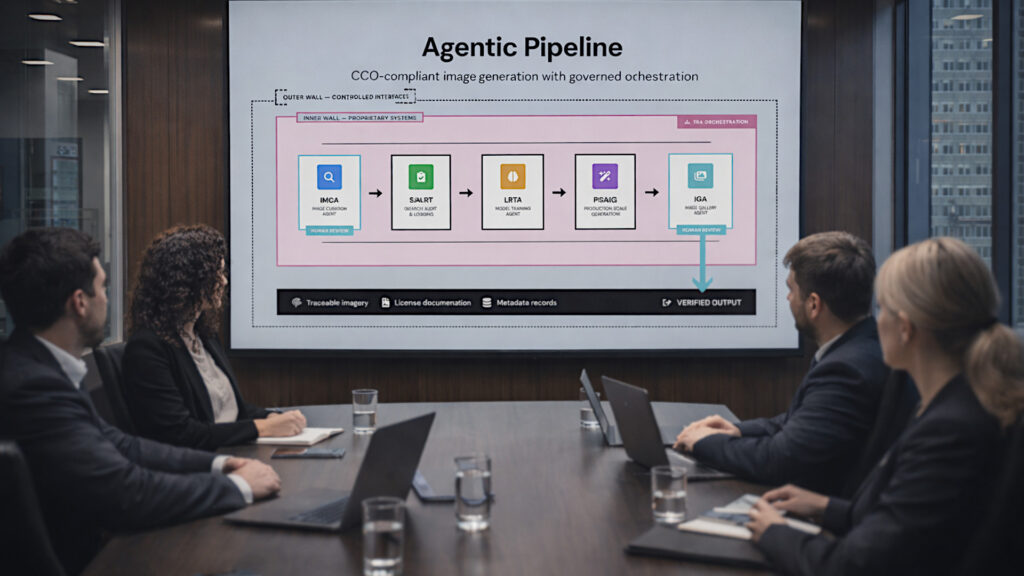

Modern agentic systems increasingly operate as distributed execution environments rather than standalone applications. Multiple agents coordinate asynchronously across different systems and contexts. Some workloads execute locally. Others route through centralized orchestration layers. Human approval gates, telemetry systems, policy enforcement, audit trails, and workload supervision become operational necessities rather than optional features.

This starts to resemble industrial operational infrastructure more than conventional software.

The challenge is no longer simply generating outputs from a model. The challenge becomes supervising a distributed network of intelligent processes operating across heterogeneous environments while maintaining reliability, governance, and operational traceability.

That is precisely the category of problem modern edge orchestration platforms were designed to address.

Edge orchestration platforms were originally built to solve operational complexity in large distributed environments. Imagine a utility company with thousands of remote devices, substations, sensors, industrial controllers, and localized compute nodes spread across multiple regions.

Those systems need software updates, security policies, telemetry collection, workload deployment, fault monitoring, rollback capability, and centralized operational oversight – often without direct human interaction onsite.

Hardware-agnostic orchestration platforms create a supervisory layer that manages all of this regardless of the underlying hardware vendor or device architecture.

Agentic AI systems are beginning to encounter many of the same operational realities. Instead of supervising physical machines alone, organizations are increasingly supervising distributed networks of AI agents, inference workloads, localized automation systems, and policy-bound execution environments.

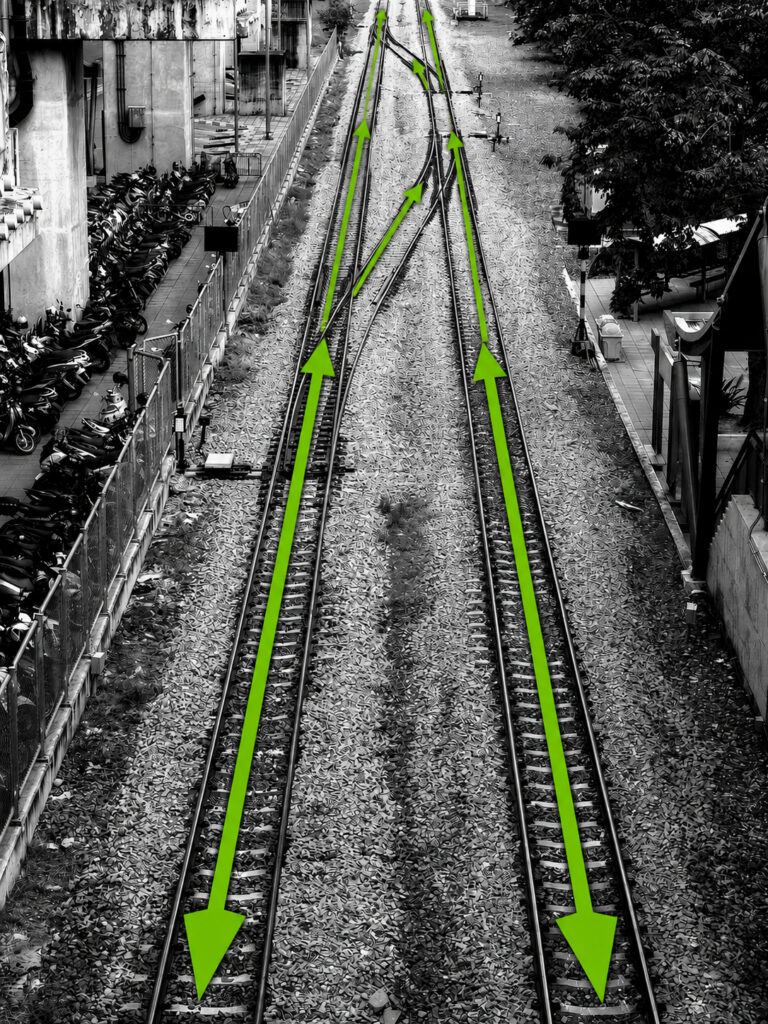

Industrial edge systems are becoming increasingly software-native.

Agentic AI systems are becoming increasingly infrastructure-native.

The two worlds are beginning to converge.

Why Hardware Abstraction Matters

One of the largest operational challenges in both IoT and distributed AI systems is fragmentation.

Organizations rarely operate in uniform environments. Infrastructure accumulates over time. Different vendors, device architectures, operating systems, networking conditions, and deployment constraints create operational complexity that scales rapidly as environments grow.

Hardware-agnostic orchestration attempts to solve this by abstracting the underlying infrastructure.

Instead of building operational logic around a specific hardware platform, organizations manage workloads through centralized software layers capable of deploying and supervising workloads across many device types simultaneously.

This creates several important operational advantages.

First, infrastructure becomes more portable. Organizations can evolve hardware strategies without rewriting entire operational systems.

Second, deployment velocity increases. New edge devices can often be provisioned remotely through zero-touch deployment models rather than requiring extensive manual configuration.

Third, operational resilience improves. Distributed workloads can shift between environments when hardware fails or conditions change.

Finally, AI deployment becomes far more practical at scale. Inference workloads can operate locally where latency, bandwidth, privacy, or regulatory constraints make centralized cloud execution undesirable.

These are not theoretical concerns. They are increasingly becoming operational requirements.
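As a rough illustration of the resilience point above, the sketch below moves workloads off a failed node and onto healthy nodes that still have spare capacity. The `Node` class, its fields, and the `reschedule` function are hypothetical names invented for this example. Real orchestration platforms apply far richer scheduling rules (architecture matching, affinity, policy), so treat this as a minimal sketch rather than how any particular product works.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical edge node; all fields are illustrative assumptions."""
    name: str
    arch: str
    capacity: int                      # maximum number of workloads
    healthy: bool = True
    workloads: list[str] = field(default_factory=list)


def reschedule(nodes: list[Node]) -> list[str]:
    """Move workloads off unhealthy nodes onto healthy nodes with free slots.

    Returns the names of workloads that could not be placed anywhere;
    those stay assigned to their failed node.
    """
    unplaced: list[str] = []
    for failed in (n for n in nodes if not n.healthy):
        for workload in list(failed.workloads):
            candidates = [
                n for n in nodes
                if n.healthy and len(n.workloads) < n.capacity
            ]
            if not candidates:
                unplaced.append(workload)
                continue
            # Prefer the healthy node with the most free slots.
            target = max(candidates, key=lambda n: n.capacity - len(n.workloads))
            failed.workloads.remove(workload)
            target.workloads.append(workload)
    return unplaced
```

A supervisory layer might call something like `reschedule(fleet)` whenever a health check marks a node as failed, then alert an operator about anything returned as unplaced.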

The Companies Driving the Shift

Several vendors have emerged as significant players in this space, though they tend to approach the market from different angles.

Companies such as ZEDEDA and SUSE Industrial Edge focus heavily on cloud-native edge orchestration and large-scale fleet supervision. Their platforms emphasize Kubernetes-native deployment models, lifecycle management, and infrastructure abstraction across highly distributed environments.

Other firms, including Barbara and Mutexer, are more focused on industrial modernization. Their work centers around OT/IT convergence, software-defined automation, edge-native control systems, and reducing dependency on tightly coupled industrial hardware stacks.

Meanwhile, platforms such as Clea by SECO and FairCom Edge emphasize embedded systems, telemetry, OTA lifecycle management, and lightweight edge AI deployment.

Open-source ecosystems are also playing a significant role. Projects including KubeEdge, Open Horizon, and EdgeX Foundry are increasingly attractive for organizations prioritizing vendor neutrality, sovereign infrastructure strategies, or air-gapped deployments.

What connects all of these efforts is the same underlying objective: operational intelligence that is portable, distributed, and infrastructure-flexible.

Why the C-Suite Should Care

Most enterprises are entering a period where operational systems will become increasingly hybrid.

Some workloads will remain centralized in the cloud. Others will execute at the edge. Some environments will remain air-gapped due to regulatory or operational requirements. Legacy infrastructure will continue to coexist alongside modern AI-native systems for years, if not decades.

This creates a strategic problem.

Organizations that tightly couple intelligence to proprietary infrastructure may find themselves operationally constrained precisely when flexibility becomes most important.

The larger issue is not simply cost or modernization. It is adaptability.

As distributed AI systems mature, operational supervision becomes increasingly critical. The conversation shifts away from model novelty and toward execution reliability, governance enforcement, auditability, deployment consistency, and infrastructure resilience.

In regulated industries especially, distributed intelligence without operational traceability quickly becomes a liability.

This is why hardware-agnostic orchestration matters beyond engineering teams.

It represents a foundational layer for managing distributed operational intelligence at enterprise scale.

THE BOTTOM LINE

The Emerging Direction

The long-term trajectory is becoming increasingly visible.

Industrial systems are becoming more software-defined.

Edge infrastructure is becoming more cloud-native.

AI systems are becoming more distributed.

Agentic systems are becoming operational infrastructure.

The organizations preparing for this shift are not merely modernizing hardware environments. They are building the supervisory and orchestration layers capable of managing intelligent systems across increasingly complex operational landscapes. That infrastructure layer may ultimately become as important as the models themselves.

Velocity Ascent builds AI-powered systems for regulated and operationally sensitive industries. We specialize in agentic infrastructure, edge-supervised AI pipelines, and distributed orchestration environments designed to maximize autonomous capability while maintaining governance, traceability, security, and operational control across cloud, edge, and industrial systems.

Core Concepts — A Plain-English Overview

What Are Industrial Edge Systems?

Industrial edge systems are localized computing environments positioned close to physical operations rather than inside centralized cloud infrastructure.

Instead of sending every signal, command, or sensor reading back to a distant data center, edge systems process information near the source of activity. This reduces latency, improves resilience, and allows operations to continue even when connectivity to the cloud is interrupted.

Examples include:

- factory floor automation systems

- utility monitoring infrastructure

- logistics and warehouse operations

- transportation systems

- energy infrastructure

- oil and gas facilities

In many cases, these environments operate continuously and require high reliability, low latency, and strict operational oversight.
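One common way edge software keeps operating through a connectivity outage is a store-and-forward buffer: readings are kept locally and sent upstream once the link returns. The sketch below is a minimal illustration under stated assumptions. The `StoreAndForward` class and the `send` callable are hypothetical, and the example assumes the uplink signals an outage by raising `ConnectionError`.

```python
from collections import deque


class StoreAndForward:
    """Buffers readings locally and flushes them when the uplink is available."""

    def __init__(self, send, max_buffered: int = 1000):
        # `send` is an assumed callable that raises ConnectionError when offline.
        self._send = send
        # A bounded deque drops the oldest reading first once it is full.
        self._buffer = deque(maxlen=max_buffered)

    def record(self, reading: dict) -> None:
        """Store a reading, then try to deliver everything that is pending."""
        self._buffer.append(reading)
        self.flush()

    def flush(self) -> int:
        """Send buffered readings in order; return how many were delivered."""
        sent = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break  # still offline: keep the reading and retry later
            self._buffer.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._buffer)
```

The bounded buffer is a deliberate trade-off in this sketch: during a long outage the device loses its oldest readings rather than running out of memory.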

What Are Agentic AI Systems?

Agentic AI systems are AI environments where software agents perform semi-autonomous or autonomous tasks on behalf of users or organizations.

Rather than generating a single response like a traditional chatbot, agentic systems may:

- retrieve information

- make decisions

- coordinate with other agents

- trigger workflows

- monitor systems

- generate outputs

- request approvals

- execute operational tasks

A mature agentic system behaves less like a standalone application and more like a distributed operational workforce composed of specialized digital actors operating under rules, permissions, and supervisory controls.
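To make "rules, permissions, and supervisory controls" more concrete, here is a minimal sketch of an approval gate with an audit trail. Everything in it is hypothetical: the `SupervisedExecutor` class, the `approver` callback (which stands in for a human approval step), and the action names are assumptions made for illustration, not a description of any specific agent framework.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str
    agent: str
    action: str
    outcome: str  # "executed" or "denied"


class SupervisedExecutor:
    """Runs agent tasks, gating sensitive actions behind an approval callback."""

    def __init__(self, needs_approval: set[str],
                 approver: Callable[[str, str], bool]):
        self._needs_approval = needs_approval   # actions that require sign-off
        self._approver = approver               # stand-in for a human decision
        self.audit_log: list[AuditEntry] = []

    def run(self, agent: str, action: str, task: Callable[[], object]):
        """Execute `task` unless the action is gated and approval is refused."""
        if action in self._needs_approval and not self._approver(agent, action):
            self._log(agent, action, "denied")
            return None
        result = task()
        self._log(agent, action, "executed")
        return result

    def _log(self, agent: str, action: str, outcome: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditEntry(stamp, agent, action, outcome))
```

In this sketch every attempt is recorded whether or not it runs, which is the property that makes the log useful for traceability.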

What Are IoT Systems?

IoT stands for “Internet of Things.”

IoT systems connect physical devices to digital networks so they can collect, transmit, receive, and act upon data.

Examples include:

- environmental sensors

- smart meters

- connected industrial machinery

- surveillance systems

- wearable devices

- fleet tracking hardware

- building automation systems

The core idea behind IoT is that physical infrastructure becomes digitally observable and, increasingly, digitally controllable.

What Is Hardware-Agnostic Edge Control Software?

Hardware-agnostic edge control software acts as a supervisory layer that can manage distributed systems regardless of the underlying hardware manufacturer.

Traditionally, many operational systems were tied directly to proprietary hardware ecosystems. Modern orchestration platforms abstract those hardware differences so workloads can move between environments more easily.

This allows organizations to:

- deploy workloads across mixed hardware fleets

- centrally supervise distributed systems

- remotely update software

- scale operations without vendor lock-in

- standardize governance and security policies

- run AI workloads across diverse environments

In simple terms, it allows software intelligence to become more portable than the hardware beneath it.
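The idea of abstracting hardware differences can be sketched as a small interface that every device type implements, so the supervisory code never needs to know which hardware it is talking to. The names below (`DeviceDriver`, `JetsonDriver`, `IndustrialPcDriver`, `deploy_to_fleet`) and the image tags are hypothetical, and the drivers only return strings instead of contacting real devices.

```python
from typing import Protocol


class DeviceDriver(Protocol):
    """Assumed common interface that each hardware type implements."""
    arch: str

    def deploy(self, image: str) -> str: ...


class JetsonDriver:
    arch = "arm64"

    def deploy(self, image: str) -> str:
        return f"jetson: started {image}"


class IndustrialPcDriver:
    arch = "x86_64"

    def deploy(self, image: str) -> str:
        return f"ipc: started {image}"


def deploy_to_fleet(fleet: dict[str, DeviceDriver],
                    images: dict[str, str]) -> dict[str, str]:
    """Deploy the image built for each device's architecture.

    `images` maps an architecture name to an image tag; devices with no
    matching build are skipped rather than failing the whole rollout.
    """
    results: dict[str, str] = {}
    for name, driver in fleet.items():
        image = images.get(driver.arch)
        if image is None:
            results[name] = f"skipped: no image for {driver.arch}"
        else:
            results[name] = driver.deploy(image)
    return results
```

Supporting a new hardware type in this sketch means writing one more driver class; `deploy_to_fleet` itself does not change.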

What Are PLCs and SCADA Systems?

PLCs and SCADA systems are foundational technologies used in industrial operations.

PLC stands for Programmable Logic Controller.

These are rugged industrial computers designed to control machinery and operational processes in environments such as factories, utilities, and infrastructure facilities.

SCADA stands for Supervisory Control and Data Acquisition.

SCADA systems provide centralized visibility and supervision over industrial environments. They collect telemetry, display operational status, trigger alerts, and allow operators to monitor or control distributed systems.

Historically, PLCs and SCADA environments were highly proprietary and tightly coupled to specific hardware vendors.

Modern edge orchestration platforms are increasingly attempting to virtualize and modernize these environments through software-defined approaches.
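For readers unfamiliar with how PLC logic runs, controllers conventionally execute a repeating scan: read inputs, evaluate the control logic, write outputs. The sketch below shows one such scan as ordinary software, which is the basic premise behind a virtual PLC. The signal names (`tank_level_pct`, `drain_pump`, `high_alarm`) and the thresholds are invented for illustration, and the alarm flag stands in for the kind of status a SCADA layer would display.

```python
def scan(inputs: dict[str, float], outputs: dict[str, bool],
         high_level: float = 80.0, low_level: float = 20.0) -> dict[str, bool]:
    """Run one scan: read the tank level, apply the logic, return new outputs.

    The pump switches on at `high_level` and off at `low_level`; between the
    two thresholds it keeps its previous state so it does not chatter.
    """
    level = inputs["tank_level_pct"]
    pump_on = outputs.get("drain_pump", False)  # state from the previous scan
    if level >= high_level:
        pump_on = True
    elif level <= low_level:
        pump_on = False
    return {"drain_pump": pump_on, "high_alarm": level >= high_level}
```

A real controller would call this in a fixed-period loop with hardware I/O on either side; here the inputs and outputs are plain dictionaries so the logic can run on any machine.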

Leading vendors: hardware-agnostic edge control, orchestration, and supervision software

A few companies consistently come up as leaders in hardware-agnostic edge control, orchestration, and supervision software – especially for industrial automation, IIoT, edge AI, and distributed operations.

Here are some of the strongest current players, grouped by category as defined in our research:

| Provider | Core Focus | Strengths | Typical Customers |
|---|---|---|---|
| ZEDEDA | Edge orchestration & lifecycle management | Strong hardware abstraction, zero-touch deployment, Kubernetes/VM support | Industrial, retail, telecom, energy |
| Barbara | Industrial edge AI platform | OT/IT convergence, container orchestration, broad protocol support | Utilities, manufacturing, energy |
| SUSE Industrial Edge | Industrial edge infrastructure | Kubernetes-native, GitOps workflows, scalable fleet ops | Enterprise industrial operations |
| Clea by SECO | Full-stack edge/IoT framework | Hardware-agnostic orchestration, OTA, AI deployment | OEMs, embedded systems vendors |
| Eclipse ioFog | Open-source EdgeOps | Distributed workload orchestration, air-gapped deployments | Defense, industrial, research |
| FLECS | Industrial software layer | Software-defined automation environments | Machine builders, automation OEMs |
| FairCom Edge | Industrial data integration | OT protocol translation, edge persistence, telemetry | Manufacturing, utilities |
| Mutexer | Virtual PLC / SCADA platform | Software-defined controls on generic Linux hardware | Modern industrial automation teams |

A few observations about the market:

- The market is converging around Kubernetes + containerized edge orchestration.

- “Hardware agnostic” usually means:

  - ARM/x86 compatibility

  - support for NVIDIA Jetson, Intel, Raspberry Pi, industrial IPCs

  - virtualization/container portability

  - independence from proprietary PLC hardware

- The biggest differentiator is often whether the platform focuses on:

  - IT-style edge orchestration (ZEDEDA, SUSE, ioFog)

  - industrial OT control systems (Barbara, Mutexer, FLECS)

  - IoT/telemetry infrastructure (Clea, FairCom)

For industrial control specifically, the most interesting trend is the move toward:

- virtual PLCs (vPLC)

- software-defined automation

- edge-native SCADA

- centralized fleet supervision

- AI-assisted operations at the edge

That’s why newer vendors like Mutexer and Barbara are getting attention: they’re trying to replace traditional tightly coupled PLC/SCADA stacks with portable software layers.

Meanwhile, ZEDEDA has become one of the most recognized “horizontal” edge orchestration platforms because it abstracts heterogeneous edge hardware and supports large-scale distributed management. (ZEDEDA)

Open-source ecosystems are also important:

- KubeEdge

- Open Horizon

- EdgeX Foundry

These tend to be favored where:

- vendor neutrality matters

- air-gapped deployments are required

- organizations want to avoid cloud lock-in

One useful way to think about the competitive landscape is:

- Cloud-native edge infrastructure

  - ZEDEDA

  - SUSE

  - ioFog

- Industrial automation modernization

  - Barbara

  - FLECS

  - Mutexer

- Embedded/IoT edge platforms

  - Clea

  - FairCom

- AI-centric edge orchestration

  - Barbara

  - Clea

  - TwinEdge